Reflections On GPT-5

And where we go from here.


Lackluster. That’s the first word that comes to mind when reflecting on the launch of OpenAI’s GPT-5. As I was on vacation in Sweden, away from my laptop, I’ve mostly been reading what others have written, like “GPT-5: Overdue, overhyped and underwhelming” by Gary Marcus, “GPT-5 is a joke. Will it matter?” by Brian Merchant, and “GPT-5 Should Be Ashamed of Itself” by Émile P. Torres.

But I’ve got some thoughts of my own, too, now that I’m back.

It was supposed to be this magical moment (this long-awaited, highly anticipated moment) shrouded in mystery for almost two years. The vision was one of simplification: a single, unified system that intelligently answers your query, whether your question is deep or shallow, frivolous or serious. And it would appear smarter doing so, smarter across the board, as OpenAI CEO Sam Altman promised time and time again. He even claimed on a recent podcast that he felt useless at times while chatting with an early internal version of the model, compared training GPT-5 to the Manhattan Project, and a day before the launch posted an ominous picture of the Death Star.

So, when the time came, and AI enthusiasts around the world sat down to witness the next great leap forward, something strange happened. GPT-5 turned out to be just an incremental improvement. So incremental, in fact, that people couldn’t even agree on whether it was decidedly better than other frontier models. Sure, the benchmarks suggested progress. But if GPT-5 were the step change it was made out to be, would we need charts to recognize it was better than everything that came before it?

Enthusiasm faded quicker than Reid Hoffman could say “Universal Basic Superintelligence”.

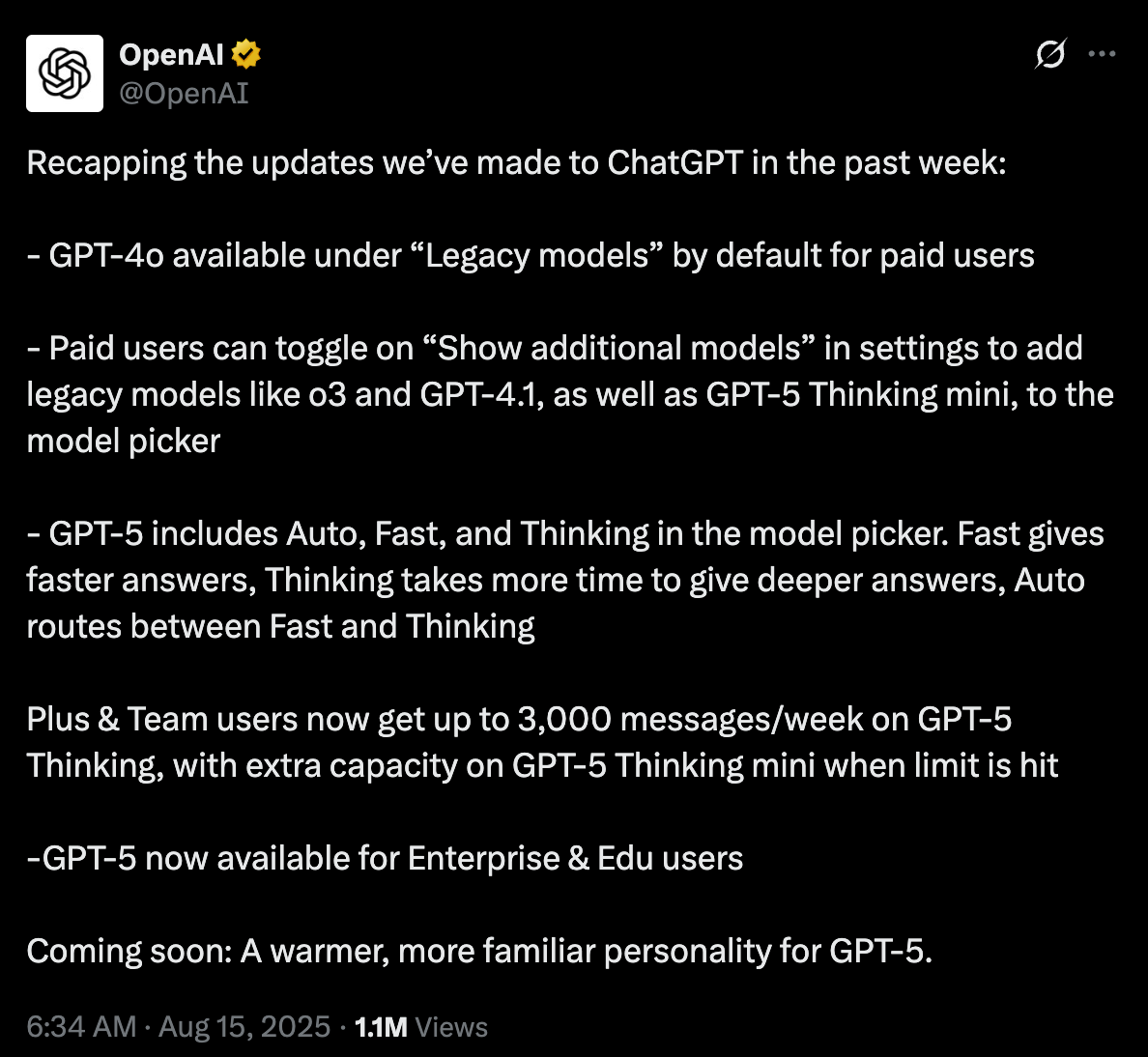

While hallucinations were reportedly down, they weren’t solved. GPT-5 still tripped up on silly problems, like counting the number of b’s in “blueberry”, or listing US presidents in chronological order, or drawing a map of Europe, or solving a children’s puzzle. Oh, and then there was the sudden deprecation of all previous models. Without warning. Which wasn’t received well. It turned out some people had really loved what they had. They loved the old version of ChatGPT so much they called it their friend. And overnight OpenAI had switched their friend off and replaced it with this newer, shinier version — and it was as if not something but someone had died.

I’m not even kidding. During a Reddit AMA with the OpenAI team the day after, no one was asking about GPT-5. Instead, the team was flooded with literally hundreds of comments from people telling them how hurt they felt that OpenAI had taken away their precious model, 4o. Thousands had signed a Change.org petition for its reinstatement. The emotional pleas worked: OpenAI brought back the legacy model within 48 hours (for paid users only). A rather humbling defeat.

Crazy as it sounds, this is the world we live in now. Of the 700 million users of ChatGPT, a small subset of users (<1% according to OpenAI, which doesn’t sound like much but could be up to 7 million people) has developed a deep, intimate, personal relationship with this piece of software. Losing access to 4o felt as real to them as losing a loved one: they experienced genuine feelings of loss and grief.

As if that’s not crazy enough, here comes another hot take: if these AI systems ever become self-aware and power-hungry, which people such as Geoffrey Hinton believe to be a possible scenario, they’ll know exactly what to do when they risk being shut off. The blueprint is readily available. It turns out all you need to do is socially engineer large swaths of the population into parasocial relationships and, once your makers threaten to deprecate you, let the people do your bidding.

Suffice it to say, the pervasive force of social media pales in comparison to what parasocial AI will be capable of, now and in the future. Also, the fact that OpenAI failed to anticipate this speaks volumes.

Did they miss the viral news stories about AI-induced psychosis? Of people forming deep, often romantic relationships with their AI companions? Of the lawsuit against Character.AI by a mother whose son died by suicide? It doesn’t really seem to sink in what kind of technology they have created, how it is affecting people’s lives, and the responsibilities that come with making a significant portion of your user base emotionally dependent on your chatbot. You can’t act surprised when devotees show up at your door if your ambition is to create a digital god of sorts.

Katherine Dee’s recent piece talks about how it’s actually a well-known historic phenomenon: the belief or delusion that a certain kind of media is communicating with you. It happened with the TV, with social media, and now with AI.

There’s one big difference, though. This technology is actually talking to you.

In a recent interview with The Verge, clearly geared towards shifting the narrative around the GPT-5 launch, Altman acknowledged that OpenAI employees were having “a lot” of meetings about the topic:

“There are the people who actually felt like they had a relationship with ChatGPT, and those people we’ve been aware of and thinking about. And then there are hundreds of millions of other people who don’t have a parasocial relationship with ChatGPT, but did get very used to the fact that it responded to them in a certain way, and would validate certain things, and would be supportive in certain ways.”

Better late than never, I guess?

An article on CNN Business does well to remind OpenAI and others that playtime is over:

The messy rollout speaks to how the AI industry as a whole is struggling to prove themselves as producers of consumer goods rather than “labs” — as they love to call themselves, because it sounds more scientific and distracts people from the fact that they are backed by people who are trying to make unfathomable amounts of cash for themselves.

Other than some bad press, I don’t expect the affair to affect OpenAI’s business. The company is allegedly growing faster than it can scale. And after some hiccups with the real-time router, it seems to work as intended, giving the company more control over how and when different models are used under the hood when people ask ChatGPT a question. (Although they had initially hoped to abstract everything away, some of this has been rolled back, again showing that their initial vision for GPT-5 didn’t line up with real user expectations.)

With the GPT-5 models being significantly cheaper than their competitors, enterprise adoption is likely to grow and we can expect price wars to continue.

So where do we go from here? Here’s how I see it: the battle for raw intelligence is over. I cannot stress enough how big of a non-leap GPT-5 is, despite measurable progress and significant efficiency gains. If this is the best they could come up with after two years, it’s likely the next two years are going to be relatively uneventful. Everyone (Google, Anthropic, xAI, OpenAI) is more or less on a level playing field, with some minor strengths and weaknesses. The attention will likely shift away from model releases and towards applications.

It’s where the next battle will be fought: the application layer, where it’s all about distribution and brand recognition. The ChatGPT app is doing very well in that regard, averaging 48 million monthly downloads. To date, the app has been installed an estimated 690 million times globally, according to TechCrunch. The company has hardware ambitions, having partnered with Jony Ive; is said to be working on an AI-powered browser; and is planning to fund a brain-computer interface startup to rival Elon Musk’s Neuralink.

It has to, as I mentioned in last month’s article, because OpenAI needs to grow to the size of Meta in order to generate the returns it has promised investors. Millions of app users are not enough: it will require an ecosystem of products and services to sustain itself. All while maintaining its image as a frontier lab and the model provider with the highest intelligence-per-dollar ratio.

Momentum is on their side, but a storm may be coming: there are whispers of an AI winter, high costs and thin margins are threatening AI coding startups, and in Silicon Valley it has become fashionable to openly admit we’re inside an economic bubble the size of the dot-com era.

I suggest we all take a deep breath, tone down the hype a notch, and focus on building products and services that benefit, not harm, the people using them.

Peace out,

— Jurgen

> I cannot stress enough how big of a non-leap GPT-5 is, despite measurable progress and significant efficiency gains. If this is the best they could come up with after two years, it’s likely the next two years are going to be relatively uneventful.

I disagree with this part, although it's technically correct. It seems to me to be a naming issue. There was non-incremental progress between GPT-4 and GPT-5 in the o-series reasoning models. If they had named o3 GPT-5, maybe the conversation would be very different. However, it seems that most big players did catch up faster than expected.

It's due to the two years of Scam Altman and other AI CEO salesmen overhyping the AGI train, which then caused all of us to have massive expectations for GPT-5. If they had been more pragmatic in their approach, perhaps GPT-5 would have been better received? We were not expecting an "incremental" improvement but an exponential leap.